[Draft] How Should I Use AI as a College Student? — A Science-Backed Guide for CS Students

Many of my students come to me with this wonderful question: “How can I leverage AI as a tool to supercharge my education without accidentally outsourcing my own intelligence?” In my opinion, the answer will fundamentally shape how much the current generation of college students gets out of its educational experience. So I decided to write my advice down in a succinct, evidence-based post for everyone.

But first a disclaimer: AI is evolving at rapid speed, and we still lack replicated, long-term, larger-scale data on its impact. What follows is my personal perspective, backed by the best available, still early, research I could find. Please take it as a guide, not gospel.

Motivation: Maximize your Learning Because AI is a Skill Amplifier

It’s 7:00 PM on a Friday.

Your friends want to go watch a movie.

But you’re sitting here debugging your C++ program that is throwing a cryptic segmentation fault.

You have stared at the same while loop for twenty minutes, and the temptation to paste the entire file into an LLM with the prompt “fix this” is overwhelming.

This is what the real professionals would do, so why shouldn’t you?

Because you would rob your brain of the exact friction it needs to become a skilled software engineer.

State-of-the-art research on real-world tasks shows that AI is an amplifier of technical skills, not an equalizer (DORA 2025; Paradis et al. 2025; Ma et al. 2026; Prather et al. 2024). Recent research by Google shows that developers with stronger coding foundations and deeper system design experience achieve a significantly larger productivity boost from AI tools (Paradis et al. 2025). In professional settings, AI magnifies the existing strengths of high-performing individuals and teams, while simultaneously amplifying the dysfunctions of struggling ones (DORA 2025). Studies conducted in educational settings show similar results: Experienced developers can use their deep knowledge of fundamentals (algorithms, data structures, and syntax) to anticipate edge cases, rapidly scan and comprehend AI outputs, spot subtle issues, and identify hallucinations to supercharge their workflows (Prather et al. 2024; Ma et al. 2026). Methodologically, experts engage with GenAI proactively to plan, steer, and verify, whereas novices tend to apply it reactively merely to bypass immediate roadblocks (Ma et al. 2026; Prather et al. 2024; Dohmke 2025; Shen and Tamkin 2026; Huang et al. 2025). Ultimately, AI enables skilled developers to compound their knowledge and productivity while novices who are not developing these skills fall further and further behind (Lodge and Loble 2026).

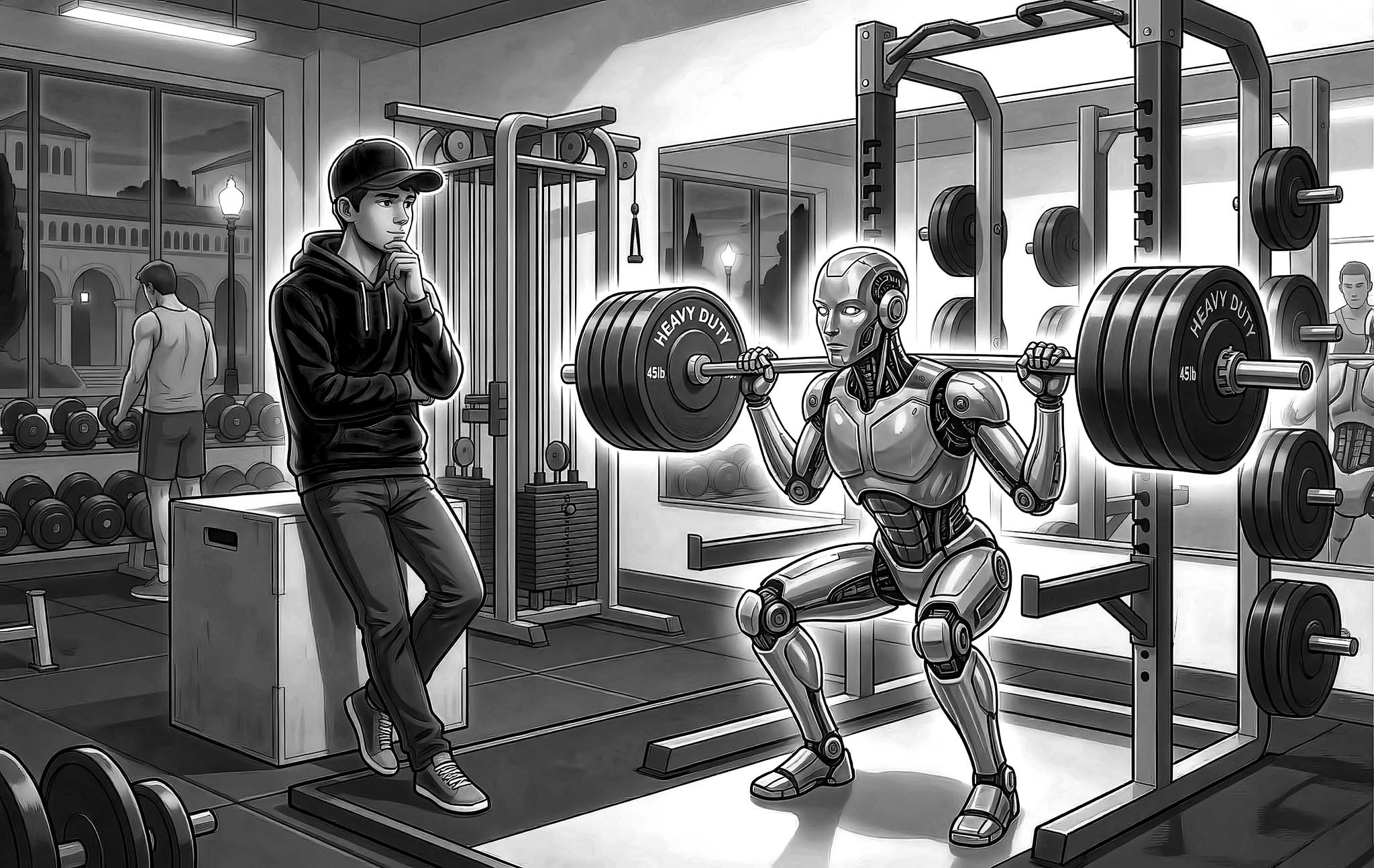

This means that as a college student, your main goal should be to maximize your skills so that, when you then add AI on top, you amplify a larger base of skills and keep compounding. Unfortunately, AI as a technology often incentivizes behavior that reduces skill formation, if used inappropriately (Yan et al. 2024; Bastani et al. 2025). To use an analogy: Using AI to do the heavy lifting in your coursework is like sending a robot to the gym instead of working out yourself.

Just like a physical workout is only effective if it is strenuous enough to challenge your muscles, learning is only effective if it challenges your mind via “desirable difficulties” (Bjork and Bjork 2011; Bjork and Bjork 2020; Brown et al. 2014). Learn more about desirable difficulties and their importance for learning in my previous blog post “Evidence-Based Study Tips for College Students”.

On the other hand, if used correctly, AI has the potential to rapidly accelerate the learning journey of students who use AI to remove undesirable difficulties while increasing desirable difficulties (Gkintoni et al. 2025; Dong 2026).

This article is a guide for students who want to elevate their learning and be well prepared for a world in which AI may increasingly replace cognitive work, and in which the bar we need to reach may rise with every release of more capable models.

The Double-Edged Sword of Cognitive Offloading: Beneficial vs. Detrimental Use

To truly master how you integrate AI into your computer science education, we need to dive into the learning science theory of cognitive offloading.

The Research: Cognitive offloading means using external tools to reduce the cognitive demand of a task (Risko and Gilbert 2016). Examples include using a calculator to avoid doing math in your head, setting a calendar reminder so you do not have to keep a deadline in mind, or asking ChatGPT to debug a script. These tools let you get a task done with less cognitive work on your end, which of course sounds very enticing!

However, whether this offloading helps or harms your education depends entirely on what you are offloading. Educational psychologists analyze this through the lens of Cognitive Load Theory (CLT), which divides our mental effort into three categories: intrinsic load (the inherent, necessary difficulty of the core concepts you are trying to learn), extraneous load (unnecessary distractions or tedious tasks that don’t contribute to the core learning goal), and germane load (the mental effort that is directly contributing to learning and understanding) (Sweller et al. 2011; Kalyuga and Plass 2025).

Based on this framework, research categorizes AI cognitive offloading into two distinct paths:

The Bad: Detrimental Offloading (Outsourcing)

Detrimental offloading occurs when you use AI to bypass the intrinsic and germane cognitive effort required to build long-term knowledge schemas in your brain (Lodge and Loble 2026). In computer science, this looks like asking an AI to “write a Python script to solve the traveling salesperson problem” when the entire point of the assignment is for you to learn algorithmic optimization.

When you outsource the intrinsic and/or germane load, you suffer several severe consequences:

- Bypassing Schema Construction: By letting the AI generate the logic, you skip the “desirable difficulties” necessary to move knowledge from your limited working memory into your long-term procedural memory (de Bruin et al. 2023; Duplice 2025). A large randomized experiment with nearly a thousand students using AI to solve math problems found that while their immediate performance was significantly higher, their durable learning suffered once the AI was removed, because they never built the internal neural pathways to solve the problems themselves (Bastani et al. 2025). A smaller study conducted by Anthropic researchers shows similar results for coding tasks (Shen and Tamkin 2026). However, both studies find that these negative learning effects can be largely mitigated by changing how the AI is used (more on this later).

- Metacognitive Laziness: The frictionless convenience of GenAI powerfully incentivizes “metacognitive laziness”, a state where learners willingly abdicate their self-regulatory responsibilities, such as planning an approach, monitoring their own comprehension, and critically evaluating their work, simply handing those executive functions over to the machine (Fan et al. 2025; Yan et al. 2025).

The Good: Beneficial Offloading

Conversely, AI can be a massive catalyst for learning if used for beneficial offloading. This occurs when you deliberately delegate extraneous cognitive load to the AI, purposefully freeing up your limited working memory to focus entirely on the intrinsic, high-value work of learning (Lodge and Loble 2026; Gkintoni et al. 2025).

In a recent 12-week quasi-experimental study, researchers explicitly taught university students a “cognitive offload instruction” model. They instructed students to delegate lower-order tasks (like brainstorming basic ideas or checking grammar/syntax) to generative AI, thereby compelling the students to focus their mental energy on higher-order analysis, structural evaluation, and logical coherence. The students who practiced this targeted, beneficial offloading demonstrated significantly greater gains in critical thinking and produced higher-quality work than the control group (Hong et al. 2025). Similarly, studies show that when AI is used to offload lower-order tasks while students engage in shared metacognitive reflection, academic achievement is significantly enhanced (Iqbal et al. 2025).

Mastering the Interaction: Strategies for Deep Learning

While offloading boilerplate is useful, the real value of AI lies in its ability to act as a sophisticated cognitive scaffold. However, how you interact with that scaffold determines whether your skills grow or wither.

1. The “Attempt First” Pattern (Brain-to-LLM)

The Research Grounding: Early neuroimaging and behavioral data suggest that grappling with a problem independently before engaging AI supports more durable knowledge retention. In the “Brain-to-LLM” sequence, a learner attempts a task manually before using an LLM for revision; this sequence was associated with significantly higher brain network connectivity and better unassisted recall than relying on AI from the start (Kosmyna et al. 2025). This leverages the Generation Effect: information we generate ourselves is remembered far better than information we passively consume (Slamecka and Graf 1978).

How and Why it Works: By forcing yourself into a “struggle protocol” for at least 15–20 minutes, you prime your brain’s retrieval pathways. Even if you fail, the mental effort creates “hooks” for the AI’s later explanation to latch onto. Without this initial struggle, you risk the “illusion of competence”—believing you understand a concept simply because you’ve seen a clear AI-generated solution (Kazemitabaar and et al. 2025).

Example Prompt (C++):

“I am trying to implement a Graph Breadth-First Search (BFS) in C++. I spent 20 minutes manually tracing my logic and writing this partial attempt: [paste code]. It is currently resulting in an infinite loop. Without rewriting the code for me, can you point out the conceptual flaw in how I am marking nodes as ‘visited’ in my queue?”
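Once you have made your own attempt, it can help to compare it against a small reference. The following is a minimal Python sketch (the prompt above targets C++, so treat this as an illustration of the idea rather than a drop-in answer). It marks each node as visited at the moment it is enqueued, which is one common way to keep a BFS from revisiting nodes forever in a graph with cycles. The function name `bfs_order` and the sample graph are hypothetical.

```python
from collections import deque


def bfs_order(graph, start):
    """Return the nodes reachable from `start` in breadth-first order."""
    visited = {start}        # mark the start node before it enters the queue
    queue = deque([start])
    order = []
    while queue:
        node = queue.popleft()
        order.append(node)
        for neighbor in graph.get(node, []):
            # Without this check, the cycle A <-> B would be enqueued forever.
            if neighbor not in visited:
                visited.add(neighbor)   # mark at enqueue time, not at dequeue time
                queue.append(neighbor)
    return order


# Hypothetical graph containing a cycle between A and B.
graph = {"A": ["B", "C"], "B": ["A"], "C": ["B"]}
print(bfs_order(graph, "A"))  # ['A', 'B', 'C']
```

Tracing this by hand on paper, queue state by queue state, is a good warm-up before asking the AI about your own version.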

2. Socratic Interaction: AI as a Tutor, Not an Oracle

The Research Grounding: Assigning the LLM the role of an “intelligent tutor” produces significantly larger gains in academic achievement and critical thinking than using it as a passive “learning tool” (Huang et al. 2025; Kazemitabaar et al. 2025). This strategy relies on the Testing Effect (Retrieval Practice): the act of retrieving information from memory strengthens learning more than re-reading or seeing an answer (Bjork and Bjork 2020).

How and Why it Works: Instead of dispensing answers, a Socratic tutor enforces “beneficial friction.” It forces you into a “think–articulate–reflect” loop, requiring you to explain your reasoning before receiving feedback (Kazemitabaar and et al. 2025). This transforms a transactional exchange into a cognitively demanding learning process.

Example Prompt (Python):

“You are a Socratic Python tutor. I am having trouble understanding how list comprehensions work when using multiple ‘if’ conditions. Do not give me the syntax or a solved example for now. Instead, ask me 2–3 probing questions to help me break down the logic of how filters are applied in sequence, and wait for my response to each.”
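After you have worked through the tutor's questions, you can check your mental model with a small experiment of your own. The sketch below is one possible check, with hypothetical variable names: it builds the same filtered list three ways so you can predict the result before running it.

```python
numbers = list(range(1, 21))

# Two chained `if` clauses: each one filters what the previous one let through.
chained = [n for n in numbers if n % 2 == 0 if n > 10]

# A single `if` clause that joins the same conditions with `and`.
combined = [n for n in numbers if n % 2 == 0 and n > 10]

# The equivalent explicit loop with nested `if` statements.
looped = []
for n in numbers:
    if n % 2 == 0:
        if n > 10:
            looped.append(n)

print(chained == combined == looped)  # True
print(chained)                        # [12, 14, 16, 18, 20]
```

Write down your prediction first; the comparison between what you expected and what you see is where the learning happens.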

3. The “Teach-Back” Method (AI as a Teachable Novice)

The Research Grounding: This strategy is rooted in the Protégé Effect—the phenomenon where students learn better by teaching others than by studying for themselves (Tomisu et al. 2025). In this “Cognitive Mirror” framework, the AI acts as a “teachable novice” with a pedagogically useful deficit, forcing the learner to engage in the effortful act of explanation (Tomisu et al. 2025).

How and Why it Works: Explaining a concept to a “confused” AI forces you to fill gaps in your own understanding, define jargon precisely, and monitor your comprehension (Tomisu et al. 2025). Generating these explanations is a “Constructive” activity in the ICAP framework, leading to superior learning outcomes (Chi and Wylie 2014).

Example Prompt (Python):

“Pretend you are a first-year CS student who doesn’t understand how object-oriented inheritance works in Python. I am going to explain it to you. Ask me ‘why’ and ‘how’ questions whenever my explanation is unclear, uses jargon without defining it, or skips a step. Point out any logical gaps and don’t accept hand-waving—if I say ‘it inherits methods,’ ask me to explain what that means precisely.”

Strategic Prompting Frameworks

Mastering the form of your prompt is just as important as the content. Two research-backed frameworks help ensure you are engineering for learning, not just output.

1. The Pedagogical Prompt Framework

The Research Grounding: The Knowledge-Learning-Instruction (KLI) framework suggests that different types of knowledge require specific instructional methods (Xiao et al. 2024). Instead of a simple query, pedagogical prompting uses a structured approach to elicit learning-oriented responses.

How and Why it Works: A well-specified pedagogical prompt includes five key components: the AI’s Role (e.g., Socratic Tutor), the Learner’s Level (e.g., Intro CS), the Problem Context, a Challenge Articulation, and strict Guardrails (Xiao et al. 2024). This prevents the AI from defaulting to “answer mode” and ensures the scaffolding matches your actual needs.

Example Prompt (C++):

“Act as an Intro-level C++ tutor. I am a beginner student struggling with pointer arithmetic. Specifically, I don’t understand how adding 1 to an integer pointer changes its address by 4 bytes. Guardrail: Do not provide the direct mathematical formula. Instead, provide a step-by-step worked example using an array of 5 integers and ask me to predict the address of the third element.”
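If you write prompts like this often, a small template can keep you from forgetting one of the five components. The following is a minimal sketch, not part of the cited framework: the class name `PedagogicalPrompt`, its field names, and the output wording are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class PedagogicalPrompt:
    """Hypothetical container for the five components of a pedagogical prompt."""
    role: str             # e.g. "a Socratic tutor"
    learner_level: str    # e.g. "Intro CS"
    problem_context: str  # what you are working on
    challenge: str        # what exactly you do not understand
    guardrails: str       # what the AI must not do

    def render(self) -> str:
        """Assemble the components into one prompt, one component per line."""
        parts = [
            f"Act as {self.role}.",
            f"My level: {self.learner_level}.",
            f"Context: {self.problem_context}",
            f"What I am stuck on: {self.challenge}",
            f"Guardrails: {self.guardrails}",
        ]
        return "\n".join(parts)


prompt = PedagogicalPrompt(
    role="an intro-level C++ tutor",
    learner_level="beginner",
    problem_context="I am studying pointer arithmetic.",
    challenge="I don't understand why adding 1 to an int pointer moves the address by several bytes.",
    guardrails="Do not give me the formula; ask me to predict addresses in a worked example.",
)
print(prompt.render())
```

Because every field is required, the dataclass raises an error if you leave a component out, which is the point: it forces you to articulate your challenge and guardrails before you ask.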

2. Requirement-Oriented Prompt Engineering (ROPE)

The Research Grounding: ROPE shifts your effort away from low-level syntax recall and toward Computational Thinking and Requirement Specification (Denny et al. 2024). This is akin to the core “requirement elicitation” step in professional software engineering.

How and Why it Works: In “Prompt Problems,” your task is to analyze a complex problem and formulate a precise natural language prompt that guides the AI to generate the correct code (Denny et al. 2024). This forces you to engage in high-level abstraction and logical decomposition—the hardest and most valuable parts of programming—while the AI handles the syntax.

Example Prompt (Python):

(Student task) “Write a Python function process_data that takes a pandas DataFrame. Requirement 1: Drop all rows where the ‘Status’ column is NaN. Requirement 2: Group the data by ‘Department’ and calculate the mean of the ‘Salary’ column. Requirement 3: Return the resulting Series sorted in descending order. Requirement 4: Do not use any loop structures.”

“Learning results from what the student does and thinks and only from what the student does and thinks. The teacher can advance learning only by influencing what the student does to learn.” (attributed to Herbert A. Simon)

Use AI for:

- Personalized Feedback: (Vorobyeva et al. 2025)

- Adaptive Scaffolding:

- Simulating Worked Examples:

- Generative AI should be utilized as a “bicycle for the mind”—a tool that amplifies your cognitive reach but still requires your active control, steering, and judgment

High-Friction Study Patterns

To move from “passive consumer” to “active builder,” you need study patterns that introduce desirable difficulties—friction that feels hard in the moment but results in better long-term retention.

1. The Alternative Approaches Pattern

The Research Grounding: Comparing, contrasting, and critiquing diverse solutions is a higher-order cognitive task that develops relational understanding (Garcia 2025). Seeking multiple perspectives prevents “mental fixation” on a single, potentially sub-optimal solution.

How and Why it Works: Prompting the AI to generate multiple algorithms for the same problem forces you into an evaluative role. You learn to analyze trade-offs in time complexity, space complexity, and code readability (Garcia 2025).

Example Prompt (C++):

“Show me three different methods for reversing a string in-place in C++ (e.g., using a standard library algorithm, a two-pointer approach, and recursion). Do not just give me the code—provide a detailed comparison of their Big-O complexities and explain when each would be the ‘best’ choice in a production environment.”

2. Parsons Problems & Explain in Plain English (EiPE)

The Research Grounding: Grounded in Cognitive Load Theory, Parsons Problems (scrambled code blocks) and EiPE (writing natural language descriptions of code) target “relational” understanding (Denny et al. 2024; Smith et al. 2024). These techniques reduce the extraneous load of syntax while maximizing the “germane” load of logical structure (Ericson et al. 2017).

How and Why it Works: Parsons Problems remove the burden of environment setup and syntax errors, letting you focus entirely on control flow and program logic (Ericson et al. 2017). Similarly, the “Explain in Plain English” rule proves whether you understand the code or are just recognizing patterns.

Example Prompt (Python):

“Create a Python Parsons problem for implementing a Binary Search. Write a correct solution (about 10 lines). Present the lines to me in SCRAMBLED order, numbered randomly. Include 2 ‘distractor’ lines that look plausible but are logically incorrect. Do NOT show me the correct solution.”
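If you want to build such an exercise yourself, for example to quiz a study partner or to sanity-check what the AI produced, the mechanics are simple. The sketch below is one possible way to do it; the helper names `make_parsons_problem` and `check_answer`, the particular binary search solution, and the two distractors are all chosen here for illustration.

```python
import random

# One correct solution, stored line by line (indentation is part of the puzzle).
SOLUTION = [
    "def binary_search(items, target):",
    "    low, high = 0, len(items) - 1",
    "    while low <= high:",
    "        mid = (low + high) // 2",
    "        if items[mid] == target:",
    "            return mid",
    "        if items[mid] < target:",
    "            low = mid + 1",
    "        else:",
    "            high = mid - 1",
    "    return -1",
]

# Plausible but wrong lines.
DISTRACTORS = [
    "    while low < high:",  # skips the case where one candidate remains
    "            low = mid",  # can loop forever when high == low + 1
]


def make_parsons_problem(solution_lines, distractor_lines, seed=None):
    """Return the solution and distractor lines together in shuffled order."""
    rng = random.Random(seed)
    blocks = list(solution_lines) + list(distractor_lines)
    rng.shuffle(blocks)
    return blocks


def check_answer(chosen_lines, solution_lines):
    """An answer is correct if it reproduces the solution lines in order."""
    return list(chosen_lines) == list(solution_lines)


for number, line in enumerate(make_parsons_problem(SOLUTION, DISTRACTORS, seed=1), 1):
    print(f"{number:2d}. {line}")
```

Solve the puzzle on paper before checking it, and try to say in plain English why each distractor is wrong.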

The “Generation-Then-Comprehension” Protocol

If you do use AI to generate a snippet of code because you are completely stuck, you must never blindly copy-paste it. Research shows that developers who simply delegate code generation to AI completely bypass the skill formation process (Shen and Tamkin 2026). However, high-performing students naturally adopt a “Generation-Then-Comprehension” or “Hybrid Code-Explanation” workflow (Shen and Tamkin 2026). In this pattern, learners generate a piece of code and immediately follow up by prompting the AI for conceptual explanations of the underlying logic, ensuring they check and verify their own understanding rather than merely delegating the work.

Actionable Tips:

- Explain After AI: Adopt a strict personal rule. If AI writes five lines of code, you must immediately read them, trace the variables manually, and then explain the code back to the AI line by line. Ask the AI to flag any point where your explanation differs from what the code actually does, and resolve each mismatch before moving on.

- The 15-Minute Rule: Mandate a “struggle protocol”—attempt the problem independently for at least 15 minutes, write down what you tried, and then ask AI for a hint, not a solution (Bjork and Bjork 2011; Bjork and Bjork 2020).

Fading the Scaffold: The Goal is Independence

The final and most important principle is Fading Scaffolding. Following the Expertise Reversal Effect, heavy AI assistance is incredibly helpful early on, but it must be systematically withdrawn as your competence grows (Kalyuga et al. 2003; Kalyuga and Plass 2025).

As you master a concept, stop asking the AI for boilerplate; start asking it only for high-level architectural critiques. If you find that you can no longer solve, without an LLM, a problem you could solve easily two months ago, you have over-indexed on offloading. The ultimate mark of successful AI use is that you eventually need the AI less for that specific skill, not more.

Summary: AI is a skill amplifier. Use it to increase the “desirable difficulty” of your studies, not to remove the effort. Master the interaction patterns that force you to think, articulate, and reflect. Your goal isn’t just to finish the assignment; it’s to build a brain that can eventually build the AI itself.

AI & Learning Quiz

Recalling what you just learned is the best way to form lasting memory. Use this quiz to test your understanding.

What is ‘cognitive offloading’?

According to research, who benefits more from using AI for technical tasks?

Which of these is an example of ‘Beneficial Offloading’ for a CS student?

What is a ‘desirable difficulty’ in the context of learning?

References

- (Bastani et al. 2025): Hamsa Bastani, Osbert Bastani, Alp Sungu, Haosen Ge, Özge Kabakcı, and Rei Mariman (2025) “Generative AI without guardrails can harm learning: Evidence from high school mathematics,” Proceedings of the National Academy of Sciences, 122(26), p. e2422633122.

- (Bjork and Bjork 2011): Elizabeth Ligon Bjork and Robert A. Bjork (2011) “Making things hard on yourself, but in a good way: Creating desirable difficulties to enhance learning,” in M. A. Gernsbacher, R. W. Pew, L. M. Hough, and J. R. Pomerantz (eds.) Psychology and the Real World: Essays Illustrating Fundamental Contributions to Society. New York, NY: Worth Publishers, pp. 56–64.

- (Bjork and Bjork 2020): Robert A. Bjork and Elizabeth Ligon Bjork (2020) “Desirable difficulties in theory and practice,” Journal of Applied Research in Memory and Cognition, 9(4), pp. 475–479.

- (Brown et al. 2014): Peter C. Brown, Henry L. Roediger III, and Mark A. McDaniel (2014) Make It Stick: The Science of Successful Learning. Cambridge, MA: Belknap Press of Harvard University Press.

- (Chi and Wylie 2014): Michelene T. H. Chi and Ruth Wylie (2014) “The ICAP Framework: Linking Cognitive Engagement to Active Learning Outcomes,” Educational Psychologist, 49(4), pp. 219–243.

- (DORA 2025): DORA (2025) “State of AI-assisted Software Development 2025.” Google Cloud / DORA.

- (Denny et al. 2024): Paul Denny, Juho Leinonen, James Prather, Andrew Luxton-Reilly, and Thezyrie Amarouche (2024) “Prompt Problems: A New Programming Exercise for the Generative AI Era,” Proceedings of the 55th ACM Technical Symposium on Computer Science Education (SIGCSE).

- (Dohmke 2025): Thomas Dohmke (2025) “Developers, Reinvented.”

- (Dong 2026): Ying Dong (2026) “Generative AI technologies and educational outcomes: a comprehensive meta-analysis comparing traditional and AI-driven approaches,” Humanities and Social Sciences Communications.

- (Duplice 2025): John J. Duplice (2025) “Generation and L2 vocabulary learning: A classroom action study on the efficacy of the generation desirable difficulty in learning L2 vocabulary,” Vocabulary Learning and Instruction, 14(1), Article 102482.

- (Ericson et al. 2017): Barbara J. Ericson, Lauren E. Margulieux, and Jochen Rick (2017) “Solving Parsons Problems versus Fixing and Writing Code,” Proceedings of the 17th Koli Calling International Conference on Computing Education Research.

- (Fan et al. 2025): Yizhou Fan, Luzhen Tang, Huixiao Le, Kejie Shen, Shufang Tan, Yueying Zhao, Yuan Shen, Xinyu Li, and Dragan Gašević (2025) “Beware of metacognitive laziness: Effects of generative artificial intelligence on learning motivation, processes, and performance,” British Journal of Educational Technology, 56, pp. 489–530.

- (Garcia 2025): Mark Anthony Garcia (2025) “Teaching and learning computer programming using ChatGPT: A rapid review of literature amid the rise of generative AI technologies,” Education and Information Technologies, 30, pp. 16721–16745.

- (Gkintoni et al. 2025): Evgenia Gkintoni, Hera Antonopoulou, Andrew Sortwell, and Constantinos Halkiopoulos (2025) “Challenging Cognitive Load Theory: The Role of Educational Neuroscience and Artificial Intelligence in Redefining Learning Efficacy,” Brain Sciences, 15, p. 203.

- (Hong et al. 2025): Hui Hong, Poonsri Vate-U-Lan, and Chantana Viriyavejakul (2025) “Cognitive Offload Instruction with Generative AI: A Quasi-Experimental Study on Critical Thinking Gains in English Writing,” Forum for Linguistic Studies, 7(7), pp. 325–334.

- (Huang et al. 2025): Ruanqianqian Huang, Avery Moreno Reyna, Sorin Lerner, Haijun Xia, and Brian Hempel (2025) “Professional Software Developers Don’t Vibe, They Control: AI Agent Use for Coding in 2025,” arXiv preprint arXiv:2512.14012.

- (Iqbal et al. 2025): Javed Iqbal, Zarqa Farooq Hashmi, Muhammad Zaheer Asghar, and Muhammad Naseem Abid (2025) “Generative AI tool use enhances academic achievement in sustainable education through shared metacognition and cognitive offloading among preservice teachers,” Scientific Reports, 15(1), Article 16610.

- (Kalyuga et al. 2003): Slava Kalyuga, Paul Ayres, Paul Chandler, and John Sweller (2003) “The Expertise Reversal Effect,” Educational Psychologist, 38(1), pp. 23–31.

- (Kalyuga and Plass 2025): Slava Kalyuga and Jan L. Plass (2025) Rethinking cognitive load theory. Oxford University Press.

- (Kazemitabaar et al. 2025): Majeed Kazemitabaar, Oliver Huang, Sangho Suh, Austin Z Henley, and Tovi Grossman (2025) “Exploring the Design Space of Cognitive Engagement Techniques with AI-Generated Code for Enhanced Learning,” International Conference on Intelligent User Interfaces (IUI). New York, NY, USA, pp. 695–714.

- (Kazemitabaar and et al. 2025): Majeed Kazemitabaar and et al. (2025) “SocraticAI: Socratic Tutoring for Programming Education with Generative AI,” Proceedings of the ACM Conference on Computing Education.

- (Kosmyna et al. 2025): Nataliya Kosmyna, Eugene Hauptmann, Ye Tong Yuan, Jessica Situ, Xian-Hao Liao, Ashly Vivian Beresnitzky, Iris Braunstein, and Pattie Maes (2025) Your Brain on ChatGPT: Accumulation of Cognitive Debt when Using an AI Assistant for Essay Writing Task. MIT Media Lab.

- (Lodge and Loble 2026): Jason M. Lodge and Leslie Loble (2026) Artificial intelligence, cognitive offloading and implications for education. University of Technology Sydney.

- (Ma et al. 2026): Qianou Ma, Kenneth Koedinger, and Tongshuang Wu (2026) “Not Everyone Wins with LLMs: Behavioral Patterns and Pedagogical Implications for AI Literacy in Programmatic Data Science,” CHI Conference on Human Factors in Computing Systems (CHI).

- (Paradis et al. 2025): Elise Paradis, Kate Grey, Quinn Madison, Daye Nam, Andrew Macvean, Vahid Meimand, Nan Zhang, Ben Ferrari-Church, and Satish Chandra (2025) “How Much Does AI Impact Development Speed? An Enterprise-Based Randomized Controlled Trial,” International Conference on Software Engineering: Software Engineering in Practice (ICSE-SEIP).

- (Prather et al. 2024): James Prather, Brent N Reeves, Juho Leinonen, Stephen MacNeil, Arisoa S Randrianasolo, Brett A. Becker, Bailey Kimmel, Jared Wright, and Ben Briggs (2024) “The Widening Gap: The Benefits and Harms of Generative AI for Novice Programmers,” Conference on International Computing Education Research (ICER), pp. 469–486.

- (Risko and Gilbert 2016): Evan F. Risko and Sam J. Gilbert (2016) “Cognitive offloading,” Trends in Cognitive Sciences, 20(9), pp. 676–688.

- (Shen and Tamkin 2026): Judy Hanwen Shen and Alex Tamkin (2026) “How AI Impacts Skill Formation.”

- (Slamecka and Graf 1978): Norman J. Slamecka and Peter Graf (1978) “The Generation Effect: Delineation of a Phenomenon,” Journal of Experimental Psychology: Human Learning and Memory, 4(6), pp. 592–604.

- (Smith et al. 2024): Samantha Boatright Smith, J. D. Zamfirescu-Pereira, Abigail O’Neill, John DeNero, and Narges Norouzi (2024) “Code Generation Based Grading: Evaluating an Auto-grading Mechanism for Explain-in-Plain-English Questions,” Proceedings of the 2024 ACM Conference on International Computing Education Research.

- (Sweller et al. 2011): John Sweller, Paul Ayres, and Slava Kalyuga (2011) Cognitive load theory. Springer.

- (Tomisu et al. 2025): Hayato Tomisu, Junya Ueda, and Tsukasa Yamanaka (2025) “The Cognitive Mirror: A Framework for AI-Powered Metacognition and Self-Regulated Learning,” Frontiers in Education, 10, p. 1697554.

- (Vorobyeva et al. 2025): Klarisa I. Vorobyeva, Svetlana Belous, Natalia V. Savchenko, Lyudmila M. Smirnova, Svetlana A. Nikitina, and Sergei P. Zhdanov (2025) “Personalized learning through AI: Pedagogical approaches and critical insights,” Contemporary Educational Technology, 17(2), p. ep574.

- (Xiao et al. 2024): R. Xiao, X. Hou, R. Ye, M. Kazemitabaar, N. Diana, M. Liut, and J. Stamper (2024) “Improving Student-AI Interaction Through Pedagogical Prompting: An Example in Computer Science Education,” Proceedings of the ACM Conference on Learning @ Scale.

- (Yan et al. 2025): Lixiang Yan, Samuel Greiff, Jason M. Lodge, and Dragan Gašević (2025) “Distinguishing performance gains from learning when using generative AI,” Nature Reviews Psychology, 4(7), pp. 435–436.

- (Yan et al. 2024): Lixiang Yan, Samuel Greiff, Ziwen Teuber, and Dragan Gašević (2024) “Promises and challenges of generative artificial intelligence for human learning,” Nature Human Behaviour, 8(10), pp. 1839–1850.

- (de Bruin et al. 2023): Anique B. H. de Bruin, Felicitas Biwer, Luotong Hui, Erdem Onan, Louise David, and Wisnu Wiradhany (2023) “Worth the effort: The Start and Stick to Desirable Difficulties (S2D2) framework,” Educational Psychology Review, 35(2), Article 41.